Facial recognition's 'dirty little secret': Millions of online photos scraped without consent

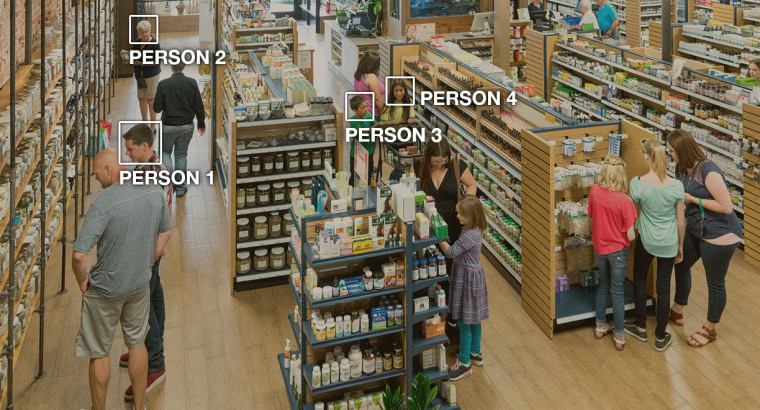

Facial recognition can log you into your iPhone, track criminals through crowds and identify loyal customers in stores.

The technology — which is imperfect but improving rapidly — is based on algorithms that learn how to recognize human faces and the hundreds of ways in which each one is unique.

To do this well, the algorithms must be fed hundreds of thousands of images of a diverse array of faces. Increasingly, those photos are coming from the internet, where they’re swept up by the millions without the knowledge of the people who posted them, categorized by age, gender, skin tone and dozens of other metrics, and shared with researchers at universities and companies.

As the algorithms get more advanced — meaning they are better able to identify women and people of color, a task they have historically struggled with — legal experts and civil rights advocates are sounding the alarm on researchers’ use of photos of ordinary people. These people’s faces are being used without their consent, in order to power technology that could eventually be used to surveil them.

That’s a particular concern for minorities who could be profiled and targeted, the experts and advocates say.

“This is the dirty little secret of AI training sets. Researchers often just grab whatever images are available in the wild,” said NYU School of Law professor Jason Schultz.

The latest company to enter this territory was IBM, which in January released a collection of nearly a million photos that were taken from the photo hosting site Flickr and coded to describe the subjects’ appearance. IBM promoted the collection to researchers as a progressive step toward reducing bias in facial recognition.

But some of the photographers whose images were included in IBM’s dataset were surprised and disconcerted when NBC News told them that their photographs had been annotated with details including facial geometry and skin tone and may be used to develop facial recognition algorithms. (NBC News obtained IBM’s dataset from a source after the company declined to share it, saying it could be used only by academic or corporate research groups.)

“None of the people I photographed had any idea their images were being used in this way,” said Greg Peverill-Conti, a Boston-based public relations executive who has more than 700 photos in IBM’s collection, known as a “training dataset.”

“It seems a little sketchy that IBM can use these pictures without saying anything to anybody,” he said.

John Smith, who oversees AI research at IBM, said that the company was committed to “protecting the privacy of individuals” and “will work with anyone who requests a URL to be removed from the dataset.”

Despite IBM’s assurances that Flickr users can opt out of the database, NBC News discovered that it’s almost impossible to get photos removed. IBM requires photographers to email links to photos they want removed, but the company has not publicly shared the list of Flickr users and photos included in the dataset, so there is no easy way of finding out whose photos are included. IBM did not respond to questions about this process.

NBC News also created a tool, based on the IBM dataset, that lets Flickr users enter their username to see whether their photos are part of the dataset.

IBM says that its dataset is designed to help academic researchers make facial recognition technology fairer. The company is not alone in using publicly available photos on the internet in this way. Dozens of other research organizations have collected photos for training facial recognition systems, and many of the larger, more recent collections have been scraped from the web.

Some experts and activists argue that this is not just an infringement on the privacy of the millions of people whose images have been swept up — it also raises broader concerns about the improvement of facial recognition technology, and the fear that it will be used by law enforcement agencies to disproportionately target minorities.

“People gave their consent to sharing their photos in a different internet ecosystem,” said Meredith Whittaker, co-director of the AI Now Institute, which studies the social implications of artificial intelligence. “Now they are being unwillingly or unknowingly cast in the training of systems that could potentially be used in oppressive ways against their communities.”

HOW FACIAL RECOGNITION HAS EVOLVED

In the early days of building facial recognition tools, researchers paid people to come to their labs, sign consent forms and have their photo taken in different poses and lighting conditions. Because this was expensive and time consuming, early datasets were limited to a few hundred subjects.

With the rise of the web during the 2000s, researchers suddenly had access to millions of photos of people.

Amazon Rekognition enables users to track people through a video even when their faces are not visible. Amazon


“They would go into a search engine, type in the name of a famous person and download all of the images,” said P. Jonathon Phillips, who collects datasets for measuring the performance of face recognition algorithms for the National Institute of Standards and Technology. “At the start these tended to be famous people, celebrities, actors and sports people.”

As social media and user-generated content took over, photos of regular people were increasingly available. Researchers treated this as a free-for-all, scraping faces from YouTube videos, Facebook, Google Images, Wikipedia and mugshot databases.

Academics often appeal to the noncommercial nature of their work to bypass questions of copyright. Flickr became an appealing resource for facial recognition researchers because many users published their images under “Creative Commons” licenses, which means that others can reuse their pictures without paying license fees. Some of these licenses allow commercial use.

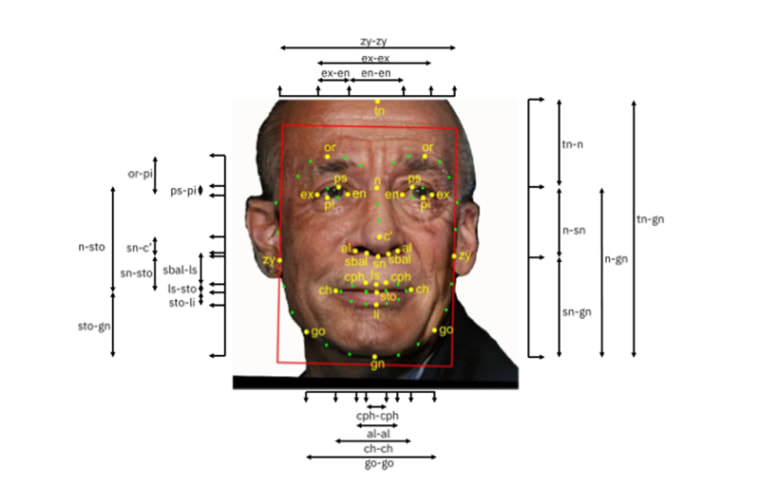

To build its Diversity in Faces dataset, IBM says it drew upon a collection of 100 million images published with Creative Commons licenses that Flickr’s owner, Yahoo, released as a batch for researchers to download in 2014. IBM narrowed that dataset down to about 1 million photos of faces that have each been annotated, using automated coding and human estimates, with almost 200 values for details such as measurements of facial features, pose, skin tone and estimated age and gender, according to the dataset obtained by NBC News.

IBM calculated dozens of measurements for every face included in the dataset. IBM


It’s a single case study in a sea of datasets taken from the web. According to Google Scholar, hundreds of academic papers have been written on the back of these huge collections of photos (which have names like MegaFace, CelebFaces and Faces in the Wild), contributing to major leaps in the accuracy of facial recognition and analysis tools. It was difficult to find academics who would speak on the record about the origins of their training datasets; many have advanced their research using collections of images scraped from the web without explicit licensing or informed consent.

The researchers who built those datasets did not respond to requests for comment.

HOW IBM IS USING THE FACE DATABASE

IBM released its collection of annotated images to other researchers so that they can use it to develop “fairer” facial recognition systems, meaning systems that more accurately identify people of all races, ages and genders.

“For the facial recognition systems to perform as desired, and the outcomes to become increasingly accurate, training data must be diverse and offer a breadth of coverage,” said IBM’s John Smith in a blog post announcing the release of the data.

The dataset does not link the photos of people’s faces to their names, which means any system trained on the photos would not be able to identify named individuals. But civil liberties advocates and tech ethics researchers have still questioned the motives of IBM, which has a history of selling surveillance tools that have been criticized for infringing on civil liberties.

For example, in the wake of the 9/11 attacks, the company sold technology to the New York City Police Department that allowed it to search CCTV feeds for people with particular skin tones or hair color. IBM has also released an “intelligent video analytics” product that uses body camera surveillance to detect people by “ethnicity” tags, such as Asian, black or white.

IBM said in an email that the systems are “not inherently discriminatory,” but added: “We believe that both the developers of these systems and the organizations deploying them have a responsibility to work actively to mitigate bias. It’s the only way to ensure that AI systems will earn the trust of their users and the public. IBM fully accepts this responsibility and would not participate in work involving racial profiling.”

Today, the company sells a system called IBM Watson Visual Recognition, which IBM says can estimate the age and gender of people depicted in images and, with the right training data, can be used by clients to identify specific people from photos or videos.

NBC News asked IBM what training data IBM Watson used for its commercial facial recognition abilities, pointing to a company blog post that stated that Watson is “transparent about who trains our AI systems, what data was used to train those systems.” The company responded that it uses data “acquired from various sources” to train its AI models but does not disclose this data publicly to “protect our insights and intellectual property.”


Is there any sense of privacy we should expect? I think not.

Sorry, ma'am, that's private.

Not if you want a driver's license or a state ID. They make sure you fit in the 'grid', no smiling.

Nope, especially if you're putting photos of yourself out in public.

These companies and researchers are just being lazy by using these images without permission. Realistically, even if you never post pictures of yourself, there is only one way to prevent your image from being collected and used: wear a mask in public at all times. How many times is your picture taken when you shop at Walmart? If it's video, every frame is a photo. There are cameras in the parking lot, at the entrance and exit, and throughout the store. When you put your credit card into the reader to pay, you are on camera from multiple angles. It's not just Walmart; it's everywhere. I've also seen the image quality that even mid-range security cameras can deliver, and I'm convinced that most of the surveillance footage we see on TV is being purposely degraded, maybe to give criminals a false sense of security, or maybe because they're afraid of public outcry if we realized how many crystal-clear, zoomable images of us are being taken every day.

When the Patriot Act passed, it allowed the government to scrape the internet. They built two computer processing facilities at a cost of billions to completely scrape everything going through.... Initially this was to track messages for terrorism intel.... but they have been expanding this ever since....

9,000+ secret FISA warrants were issued from the super-secret terrorism courts last year, and only 8 of them had anything to do with terrorism (according to the last data released by DHS). They were predominantly for drug trafficking cases....

CCTV cameras on every street corner, ostensibly to track traffic patterns, but we saw how they were used during the Boston Marathon bombing search.....

Facial recognition software got a huge boost from the government, which went deep into its development at the same time......

This is the result......

Privacy? 9/11 ended the right to privacy in America....

hey,

we actually agree on something

Tech companies have not only become proficient at facial recognition software, but they've also been using these "Throwback Thursday" posts for age progression software. People think it's so great to post old pictures of themselves...alongside current photos. (These are probably the same people who sent their DNA to Ancestry or 23andMe and expect privacy without knowing how that data might actually be used.)

When we are in public, or post photos on the web, should we expect privacy? No...that would be a stretch. Most stores have posted that they use video surveillance or have security cameras...and we all just walk right on in. We aren't going to do anything illegal...but that doesn't stop the cameras. There are programs that track your online activity so that they can present ads that would appeal to you. (That's what they say, anyway.) Those 'shoppers bonus' cards we use to save money track our purchases so that they can send coupons that we will use (shop here again please!)...and the security cams can tie your photo to your shopping habits.

Privacy? There's a different privacy now...9/11 and terrorist acts have necessitated that change. It isn't all bad though...Facial recognition comes in handy when searching for an alleged terrorist in a crowd...age progression helps find children years later. Video surveillance helps catch criminals...and so on.

this stuff should be banned

It is everywhere. I was at Target the other day, and every single self-checkout lane had a camera and monitor.

Intersections have cameras taking video of what we drive, license plate numbers and photos of drivers.

I have had people show me pictures on Facebook, and it has some sort of software that tells you the names of the people in the pictures.

Everything we do online is tracked one way or another. I can look up one thing and see similar ads immediately thereafter.

When I search and check prices on certain items, I start to get ads for similar items too. It tends to stop after a week or so, but what really bothers me is that six months later an ad for that item will show up out of the blue. This means they're keeping long-term records of every oddball search.

I could never imagine all the data stored somewhere. Keeping tabs on everyone, that is a lot of info.